Part 1: The Architecture Shift—From Scripts to Stateful Systems

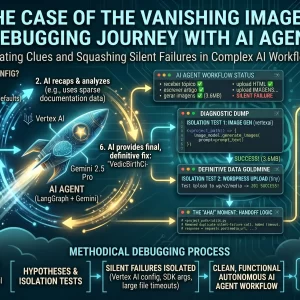

Introduction: The Limitations of “Linear” AI

For the past year, most developers have integrated Large Language Models (LLMs) using a simple linear approach: prompt in, response out. This works well for chatbots, but it breaks down for complex, multi-step tasks like autonomous publishing, where each step depends on the results of the steps before it.

A professional writer doesn’t just write once; they draft, critique, research, edit, and format.

To replicate this human workflow, we need to move from simple Python scripts to Stateful AI Agents. In this series, we document the construction of a full-stack autonomous publisher that drafts, reviews, illustrates, and posts content directly to a CMS.
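To make the contrast with "prompt in, response out" concrete, here is a minimal sketch of a draft-and-critique loop that carries state between steps. It is plain Python, not LangGraph, and every name in it (`ArticleState`, `write_draft`, `critique`, `run`) is hypothetical; the two step functions are placeholders where LLM calls would go.

```python
from dataclasses import dataclass, field


@dataclass
class ArticleState:
    """Everything the workflow knows about one article in progress."""
    topic: str
    draft: str = ""
    critiques: list = field(default_factory=list)
    revision: int = 0
    approved: bool = False


def write_draft(state: ArticleState) -> ArticleState:
    # Placeholder for an LLM call; each pass produces a new revision.
    state.revision += 1
    state.draft = f"Draft v{state.revision} about {state.topic}"
    return state


def critique(state: ArticleState) -> ArticleState:
    # Placeholder reviewer (assumption): accepts the draft at revision 3.
    if state.revision >= 3:
        state.approved = True
    else:
        state.critiques.append(f"v{state.revision}: needs more detail")
    return state


def run(topic: str, max_revisions: int = 5) -> ArticleState:
    """Loop draft -> critique until approved or the budget runs out."""
    state = ArticleState(topic=topic)
    while not state.approved and state.revision < max_revisions:
        state = write_draft(state)
        state = critique(state)
    return state


if __name__ == "__main__":
    final = run("Docker volumes")
    print(final.draft, final.approved, len(final.critiques))
```

The point of the sketch is the loop and the shared state object: a linear script would call `write_draft` once and stop, while here each pass can see the revision count and the critique history from earlier passes.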

This first article explores the foundational architecture required to support a persistent, multi-step AI workflow.

The Persistence Problem: Lessons from Docker

Our initial attempts to run the AI alongside our CMS revealed a critical architectural requirement: persistence.

When a database runs in Docker without an explicitly named volume, its data is tied to the container’s lifecycle, and we risk losing all of the agent’s memory when containers are destroyed or recreated during an update. A robust AI agent needs reliable “long-term memory” to store its progress, past drafts, and critique history. We solved this with Docker named volumes, which decouple data storage from the container’s lifespan.
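The idea of "long-term memory" can be sketched as a small key-value store of per-thread state that survives a restart. This is only an illustration: SQLite from the Python standard library stands in for Postgres so the example is self-contained, and the table name `thread_state` and the helper functions are assumptions, not the project's actual schema.

```python
import json
import os
import sqlite3
import tempfile
from typing import Optional


def open_store(path: str) -> sqlite3.Connection:
    """Open (or create) the state store. SQLite stands in for Postgres."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS thread_state ("
        "thread_id TEXT PRIMARY KEY, state TEXT NOT NULL)"
    )
    return conn


def save_state(conn: sqlite3.Connection, thread_id: str, state: dict) -> None:
    """Insert or overwrite the latest state for one agent thread."""
    conn.execute(
        "INSERT OR REPLACE INTO thread_state (thread_id, state) VALUES (?, ?)",
        (thread_id, json.dumps(state)),
    )
    conn.commit()


def load_state(conn: sqlite3.Connection, thread_id: str) -> Optional[dict]:
    """Return the stored state, or None if the thread was never saved."""
    row = conn.execute(
        "SELECT state FROM thread_state WHERE thread_id = ?", (thread_id,)
    ).fetchone()
    return json.loads(row[0]) if row else None


if __name__ == "__main__":
    path = os.path.join(tempfile.mkdtemp(), "agent.db")
    conn = open_store(path)
    save_state(conn, "article-1", {"draft": "v2", "critiques": ["too short"]})
    conn.close()  # simulate the agent process going away

    conn = open_store(path)  # a fresh process reconnects to the same file
    print(load_state(conn, "article-1"))
    conn.close()
```

The file on disk plays the role of the named volume here: as long as it outlives the process that wrote it, a restarted agent can pick up where the previous one stopped.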

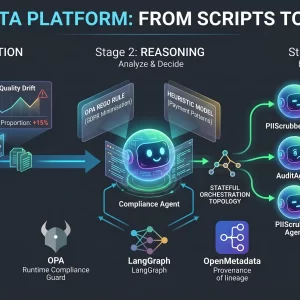

The High-Level Architecture

To support a truly autonomous workflow with human oversight, we designed a four-part microservices stack coordinated via Docker Compose:

- The Interface (Streamlit): A web dashboard for human input, monitoring, and final approval (Human-in-the-Loop).

- The Brain (LangGraph): A Python application defining the cyclic logic and state management.

- The Memory (Postgres): A database to persist the state of every active agent thread.

- The CMS (WordPress): The final destination for the generated and approved content.
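The hand-off between these components, in particular the Human-in-the-Loop approval step, can be sketched as a thread that pauses until a person decides. This is an illustrative sketch only: the `Status` values, the `Thread` class, and the `publish` callback are hypothetical names, and the callback stands in for whatever client actually posts to the CMS.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Status(Enum):
    DRAFTING = "drafting"
    AWAITING_APPROVAL = "awaiting_approval"
    APPROVED = "approved"
    REJECTED = "rejected"
    PUBLISHED = "published"


@dataclass
class Thread:
    thread_id: str
    draft: str = ""
    status: Status = Status.DRAFTING


def finish_draft(thread: Thread, text: str) -> Thread:
    """Agent side: store the draft and pause for a human decision."""
    thread.draft = text
    thread.status = Status.AWAITING_APPROVAL
    return thread


def review(thread: Thread, approved: bool) -> Thread:
    """Dashboard side: a human approves or rejects a paused thread."""
    if thread.status is not Status.AWAITING_APPROVAL:
        raise ValueError(f"thread {thread.thread_id} is not awaiting approval")
    thread.status = Status.APPROVED if approved else Status.REJECTED
    return thread


def publish(thread: Thread, post: Callable[[str], None]) -> Thread:
    """CMS side: only approved drafts are ever handed to the poster."""
    if thread.status is not Status.APPROVED:
        raise ValueError(f"thread {thread.thread_id} has not been approved")
    post(thread.draft)
    thread.status = Status.PUBLISHED
    return thread


if __name__ == "__main__":
    posted = []
    t = finish_draft(Thread("article-1"), "Hello, world")
    t = review(t, approved=True)
    t = publish(t, posted.append)  # list.append stands in for a CMS client
    print(t.status, posted)
```

Because the thread's status would be persisted between steps, the agent does not need to stay running while it waits for approval; the dashboard can load the paused thread later and resume it.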

Infrastructure as Code: The Docker Compose Stack

Below is a sample docker-compose.yml that ties these services together. It includes a health check on the database so that the agent container starts only after Postgres is ready to accept connections.

    version: '3.8'

    services:
      # --- The Memory (Stateful) ---
      postgres:
        image: postgres:15
        container_name: agent_memory
        environment:
          POSTGRES_USER: agent_user
          POSTGRES_PASSWORD: local_password
          POSTGRES_DB: agent_db
        volumes:
          - db_data:/var/lib/postgresql/data # <--- PERSISTENCE LOCK
        healthcheck:
          test: ["CMD-SHELL", "pg_isready -U agent_user -d agent_db"]
          interval: 5s
          timeout: 5s
          retries: 5

      # --- The Content Destination ---
      # NOTE: the official wordpress image expects a MySQL/MariaDB database
      # (WORDPRESS_DB_HOST and related variables); that service is not
      # defined in this sample.
      wordpress:
        image: wordpress:latest
        container_name: agent_cms
        ports:
          - "8080:80"
        volumes:
          - wp_data:/var/www/html
        depends_on:
          - postgres

      # --- The Brain (LangGraph Agent) ---
      agent:
        build: .
        container_name: agent_brain
        environment:
          # Internal Docker DNS links them together
          - DB_URI=postgresql://agent_user:local_password@postgres:5432/agent_db
          - WP_URL=http://wordpress/wp-json/wp/v2
        depends_on:
          postgres:
            condition: service_healthy # Wait for healthcheck success

    volumes:
      db_data:
      wp_data:

Conclusion

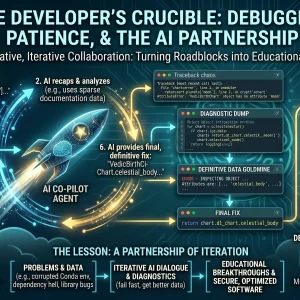

By establishing a robust infrastructure based on containerization and persistent volumes, we created the necessary foundation for a complex AI application.

The plumbing is now in place. In the next article, we will dive into the Python code that powers the agent’s “brain” and explore how to orchestrate autonomous tasks using LangGraph.

Are you building autonomous agents? What architecture challenges are you facing? Share your thoughts in the comments below.
